The post How Can Sentiment Analysis Be Used to Improve Customer Experience? appeared first on Interaction Metrics.

Sentiment analysis reveals the emotions your customers feel, but knowing how they feel is only useful if you know why they feel the emotion in the first place.

If you want to improve customer experience, you need more than just emotional data.

You need to know what customers are actually saying so you can identify key themes, uncover root causes, and take meaningful action.

That’s where Interaction Metrics steps in. We provide comprehensive text analysis services that include sentiment analysis to deliver actionable insights you can use to improve the customer experience.

If you’re ready to boost overall customer satisfaction, retention, and customer loyalty, you can use customer sentiment analytics to transform your approach to the customer experience, or work with a partner like Interaction Metrics that can do it for you.

Read on to discover 10 actionable ways you can use customer sentiment analysis to improve the customer experience and strengthen your company’s bottom line.

What Is Customer Sentiment Analysis?

Customer sentiment analysis involves evaluating customer data to understand emotional tone—whether it’s positive, negative, or neutral. It relies on natural language processing (NLP) and machine learning to classify customer feedback.

For example:

- “The checkout process was seamless!” → Positive sentiment.

- “This app keeps crashing.” → Negative sentiment.

- “It’s okay.” → Neutral sentiment.
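As a rough illustration of the three-way classification above, here is a minimal sketch that labels a comment by counting cue words. The word lists and the `classify_sentiment` function are hypothetical and exist only for this example. Real sentiment tools rely on trained NLP models rather than a fixed keyword list.

```python
import re

# Hypothetical cue words for illustration only; production tools learn
# these signals from data instead of using a hand-written list.
POSITIVE = {"seamless", "great", "love", "easy", "fast"}
NEGATIVE = {"crashing", "crashes", "slow", "broken", "frustrating"}

def classify_sentiment(comment: str) -> str:
    """Label a comment positive, negative, or neutral by counting cue words."""
    words = re.findall(r"[a-z']+", comment.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

for text in ["The checkout process was seamless!",
             "This app keeps crashing.",
             "It's okay."]:
    print(text, "->", classify_sentiment(text))
```

A keyword count like this cannot tell frustration from anger or catch sarcasm, which is part of why the finer distinctions discussed next matter.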

Sentiment analysis is useful for capturing the emotional tone of customer interactions across various channels, including:

- Online reviews: Platforms like Yelp and Google Reviews reveal emotional trends in customer comments.

- Customer satisfaction surveys: Open-ended responses paired with sentiment analysis show why customers gave certain scores.

- Emails: Sentiment analysis tools scan emails for emotional cues, identifying trends like frustration or satisfaction that might not be explicitly stated, enabling timely customer support interventions.

- Customer support tickets: Tools like Zendesk and Freshdesk highlight recurring customer frustrations.

- Customer service chats: Real-time sentiment analysis helps agents adjust responses based on customer mood.

But sentiment is rarely just “positive” or “negative.” It’s more nuanced than that.

- Frustrated is different from angry—but both are negative.

- Happy is different from enthusiastic—but both are positive.

- A customer who is satisfied isn’t the same as one who’s so impressed they would recommend your company to others.

That’s why deeper sentiment classification matters.

Why Sentiment Alone Isn’t Enough

A customer might be angry, but are they angry about slow shipping, poor customer service, or product quality issues? Without knowing what they’re talking about, you can’t take effective action.

Consider this scenario:

- Sentiment analysis reveals that customer satisfaction scores are dropping.

- Text analysis shows that most of the negative comments are about a glitch in the latest app update.

Without text analysis, you’d know people were unhappy, but you wouldn’t know why. Text analysis uncovers the actual pain points, so you can fix them at the source.

How Does Sentiment Analysis Impact the Customer Experience?

Customers tend to express emotions clearly, but they aren’t always so direct when it comes to explaining why they feel what they feel.

Sentiment analysis data alone doesn’t improve the customer experience. It only reveals how they feel about your brand, product, or service. To get the most out of your data, you must dig deeper so you can connect customer emotions to specific experiences.

Here’s a Real-World Example:

Imagine a retail company noticed a spike in negative sentiment. Sentiment analysis shows customers are frustrated, but text analysis reveals why: most complaints are about shipping delays with international orders.

From there, the company knows to focus on improving international logistics rather than overhauling the entire shipping process.

To improve the customer experience effectively, you need to start with content:

- Analyze the text – Identify key themes and patterns in customer feedback.

- Overlay sentiment analysis – Determine how strongly customers feel about each issue—and how those feelings break down into specific emotional tones.

- Prioritize strategically – Focus on the issues that generate the most intense customer reactions—positive or negative.
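The three steps above can be sketched in a few lines. This is an illustrative example, assuming each comment has already been tagged with a theme and given a sentiment intensity from -2 (very negative) to +2 (very positive). The data, the scale, and the `prioritize` function are all hypothetical.

```python
from collections import defaultdict

# Hypothetical tagged comments: (theme, sentiment intensity from -2 to +2).
tagged_comments = [
    ("international shipping", -2),
    ("international shipping", -2),
    ("international shipping", -1),
    ("checkout", 1),
    ("checkout", 2),
    ("returns", -1),
]

def prioritize(comments):
    """Rank themes by total absolute intensity (volume times strength of feeling)."""
    totals = defaultdict(lambda: {"count": 0, "net": 0, "intensity": 0})
    for theme, score in comments:
        totals[theme]["count"] += 1
        totals[theme]["net"] += score             # direction of feeling
        totals[theme]["intensity"] += abs(score)  # strength, positive or negative
    return sorted(totals.items(), key=lambda item: item[1]["intensity"], reverse=True)

for theme, stats in prioritize(tagged_comments):
    print(theme, stats)
```

Ranking by absolute intensity surfaces both strong complaints and strong praise, while the `net` value shows which direction the feeling runs.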

Use sentiment analysis as a “jumping-off point” to dig into customer data. That way, with text analysis, you’ll pinpoint areas that need customer experience improvement.

How Customer Sentiment Analysis Helps Your Company

Once you understand the drivers behind customer sentiment, you can use those insights to create meaningful change across the entire customer experience. And by consistently acting on sentiment and textual data, your business gains massive advantages, such as:

- Increased customer satisfaction, because you can recognize and resolve the specific frustrations impacting your customers.

- Reduced churn and increased retention, because you can proactively address dissatisfaction before customers decide to leave.

- Strengthened brand loyalty, by identifying satisfied customers and encouraging them to become active promoters of your brand.

- Better alignment of products and services with customer expectations, allowing you to prioritize improvements that directly meet customer needs.

- Improved brand reputation, because proactively engaging with customer sentiment helps you prevent negative experiences from escalating into crises.

- More informed marketing strategies and targeted campaigns, thanks to a clear understanding of customers’ emotions and expectations.

In a Nutshell: The ability to analyze narrative customer feedback allows your team to resolve customer issues before they escalate, protecting your brand and creating satisfied customers.

10 Ways Sentiment Analysis Improves the Customer Experience

Once your team is on board with how sentiment and text analysis reveal customer insights, the next step is putting those insights into action.

Here are ten practical ways you can apply these analyses to your customer experience program.

1. Personalize Customer Experiences

Increasingly, customers expect personalized interactions at every turn. Customer sentiment analytics help you meet these expectations by revealing exactly how individual customers feel after different interactions with your company.

Using your sentiment analysis data, you can tailor communication, support, and promotions specifically to each customer’s emotional state.

For example, you might offer a special promotion or discount to a dissatisfied customer, turning a negative experience into a positive one. On the other hand, you can reward satisfied customers with exclusive offers to keep them happy, engaged, and loyal.

After providing these personalized promotions, measure customer sentiment again. An increase in positive sentiment confirms that your tailored approach is genuinely improving the customer experience.

2. Optimize the Customer Journey

Customers expect seamless experiences across every touchpoint with your business. Sentiment analysis can map customer opinions related to onboarding, purchasing, customer support, renewal, and more.

If customers frequently complain about unclear instructions during onboarding, for example, you can refine the onboarding process, reducing friction.

Then, you can regularly monitor customer satisfaction metrics to confirm the effectiveness of your actions.

3. Improve Live Customer Support

With sentiment analysis, you can quickly detect patterns in customer opinions after interacting with your live support team. Once you’ve pinpointed recurring themes within your customer support interactions, you can decide how to address them.

For instance, recurring complaints about slow responses or ineffective solutions show that your customers expect faster service and clearer communication.

On the other hand, customers who express positive sentiment after working with support can reveal which aspects of your service to lean into and systematize.

Use your insights to develop targeted trainings for your support teams, and you’ll quickly see improvements in both customer and employee satisfaction.

4. Simplify Self-Service Experiences

Sentiment analysis can pinpoint customer frustrations with your self-service channels, like FAQ pages, knowledge bases, or automated chatbots.

Looking for patterns of negative sentiment around these channels lets you immediately address areas where customers get stuck or confused. You’ll likely discover the need for clearer content, better navigation, or chatbot enhancements.

All of these tools enable customers to resolve their issues faster and more independently. Optimizing them can lead to a massive improvement in your customers’ overall experience.

5. Align Your Messaging With Customer Desires

Sentiment analysis can optimize your marketing campaigns by tracking customer reactions to ads or promotional messaging in the comment section of your posts.

For example, if your sentiment analysis detects negative reactions to a specific ad campaign, you can immediately adjust or halt the messaging to preserve your brand reputation and save on ad spend.

Conversely, highly positive reactions indicate messaging that resonates, so you can amplify successful campaigns for improved customer engagement.

6. Streamline Customer Onboarding

Customers often express frustration or confusion when onboarding processes are complicated. Sentiment analysis helps identify these moments of friction early.

By analyzing feedback from new customers, you can simplify the onboarding process, clarify confusing instructions, or proactively provide support resources.

This means customers experience fewer hurdles from the start. The result is increased satisfaction and smoother interactions with your company.

7. Improve Employee Training and Engagement

Customer sentiment often reflects directly on the quality of employee interactions. Using sentiment analysis to provide targeted employee feedback can enhance training effectiveness and boost employee engagement.

If sentiment analysis shows customers repeatedly frustrated by service interactions, provide targeted training to customer-facing teams, emphasizing specific language and empathy techniques.

Similarly, you can highlight positive customer feedback during training sessions to reinforce desired behaviors and improve overall service quality.

8. Refine Product Development

Collecting and analyzing sentiment through product-related customer feedback often tells product teams exactly what customers want to see in the next release (or just as importantly, what users don’t want to see).

Sentiment analytics can highlight subtle but significant issues within your product experience.

For example, ongoing negative sentiment about complicated navigation or confusing pricing tends to surface clearly in sentiment reports.

Product managers can use these insights to prioritize enhancements that directly align with customer insights and customer needs.

Incorporate sentiment analysis into regular product review cycles to make sure that your latest updates and new releases align with what customers expect. If sentiment analysis shows repeated complaints about product complexity, simplify or redesign the experience to align with customer expectations.

9. Optimize Billing and Payment Processes

Sentiment analysis often highlights customer frustrations with billing and payment processes.

If negative customer sentiment repeatedly emerges around confusing invoices or payment errors, you can prioritize streamlining these areas.

Clearer invoices or simpler payment methods directly improve customer satisfaction and reduce support inquiries.

10. Monitor and Learn From Competitor Sentiment

Sentiment analysis isn’t just for your own customer data. You can also apply it to analyze public opinions about your competitors.

Once you’ve identified what customers praise about your competitors, you can adopt similar practices to increase your own customers’ satisfaction.

You can also use what you learn about competitors to capitalize on your differentiators. If sentiment analysis indicates frequent complaints about a competitor’s slow customer service, you can proactively highlight your rapid response time as a competitive advantage.

Aligning your strengths with competitors’ weaknesses lets you enhance your value proposition and deliver a superior customer experience.

How to Turn Customer Sentiment Insights into Action

The actionable methods outlined above are practical starting points to improve the customer experience. But to consistently drive measurable improvements, you need a structured approach to applying sentiment insights.

All of the methods above use the same repeatable framework to put sentiment analysis into action.

Here is the framework.

1. Categorize Insights Clearly

Organize sentiment data clearly by department, such as marketing, customer support, product development, and sales. This makes it easy for each team to take direct ownership of sentiment improvements.

Once your data is sorted by department, group customer comments into clear sentiment categories. For example:

- Frustrated

- Neutral

- Mixed

- Satisfied

- Enthusiastic

Then, review the comments within each category of sentiment. The goal is to identify recurring themes and understand why customers feel the way they do.

For example, if your sentiment analysis identifies consistent frustration about slow customer support responses, you might discover themes such as delayed follow-ups, lack of clarity, or insufficient product knowledge.

Similarly, if positive sentiment emerges around your sales team, you might uncover themes related to friendly interactions, thorough product explanations, or prompt outreach.

2. Communicate Specific Actions

Once you’ve sorted your feedback by department and identified themes that explain why customers feel the way they do, you must clearly communicate sentiment insights and identified themes to the relevant teams.

When sharing themes, avoid making vague recommendations.

Instead, provide concrete steps each team can take to improve the customer experience.

For example, if customers express confusion during onboarding, clearly instruct your onboarding team on exactly which parts of the process need clarification.

Here’s an example of actionable feedback vs. poor feedback:

- Actionable Feedback: “Account setup is too complicated for new users who aren’t familiar with our platform. Many users complain that we’re asking for too much information, and the signup process takes far too long. We need to make sure we’re only requesting items we really need to save time on setup.”

- Poor Feedback: “Customers are frustrated with the setup process. Fix it.”

3. Make Changes and Measure Results

Once teams take action, measure the impact to confirm effectiveness.

Track sentiment scores before and after interventions to be sure that changes are genuinely improving customer experiences.

For instance, after refining your onboarding process, monitor sentiment closely. If sentiment scores improve significantly, you’ll have concrete evidence that your actions are making a difference.
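A before-and-after comparison can be as simple as the sketch below. It assumes each comment has a sentiment score between -1 (negative) and +1 (positive). The scores and the `sentiment_change` function are hypothetical.

```python
from statistics import mean

def sentiment_change(before, after):
    """Return the change in average sentiment score after an intervention."""
    return mean(after) - mean(before)

# Hypothetical per-comment scores on a -1 (negative) to +1 (positive) scale.
onboarding_before = [-0.5, -0.25, 0.0, -0.75, 0.25]
onboarding_after = [0.25, 0.5, 0.0, 0.75, 0.25]

change = sentiment_change(onboarding_before, onboarding_after)
print(f"Average onboarding sentiment moved by {change:+.2f}")
```

With only a handful of comments, a shift like this could be noise. Larger samples collected over comparable time periods give a more trustworthy read.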

With this 3-step framework in mind, you can apply your own creativity and identify many different ways to improve the customer experience.

Integrate Sentiment Analysis into Your Long-Term Business Strategy With Interaction Metrics

Sentiment analysis shouldn’t be an occasional project—it needs to be central to your CX strategy. Regularly share insights across departments to align improvements with customer expectations.

Most sentiment analysis tools stop at identifying emotional tone. We go further.

Here’s how:

- We identify the key themes driving customer sentiment.

- We uncover hidden issues that sentiment analysis alone would miss.

- We use a combination of AI and expert human review to avoid misclassification and keep your insights honest.

Our comprehensive text analysis services leverage AI tools together with expert human review to reveal insights that are as accurate as they are actionable.

Our approach to text analysis includes sentiment analysis that identifies common themes for insights you can use to take action. With us, you avoid common pitfalls like misclassified sarcasm, undetected nuances, or overlooked mixed emotions—issues that purely automated sentiment analysis tools often miss.

Contact Interaction Metrics today to start measuring and monitoring your customer sentiment score and improve the customer experience.

Frequently Asked Questions

What’s involved in conducting text-based sentiment analysis?

Analyzing customer sentiment involves collecting customer sentiment data from sources like survey data, comments on social media posts, online reviews, and more. Using tools powered by natural language processing and machine learning, businesses classify customer comments into clear sentiment categories (positive, negative, or neutral) to generate actionable insights.

What is the role of natural language processing in customer sentiment analytics?

Natural language processing (NLP) allows sentiment analysis tools to interpret human language accurately, recognizing context, tone, and emotions in customer interactions.

Why should businesses use social media monitoring and online reviews for sentiment analysis?

Social media comments and online reviews quickly capture real-time shifts in user sentiment and brand perception. They help businesses identify emerging issues, respond promptly to public negative feedback, and proactively manage brand reputation.

How can sentiment analysis improve customer interactions?

By understanding customer sentiment, businesses can tailor their communication, improve interactions, and create more positive experiences. Customer sentiment analytics helps personalize services, increasing engagement and loyalty.

How can customer support teams leverage sentiment analysis?

Customer support teams use sentiment analysis to detect frustration or dissatisfaction early. This allows teams to respond faster, resolve issues proactively, and turn negative interactions into opportunities for increased customer satisfaction.

Can I rely on AI software and machine learning for 100% accurate sentiment analysis?

No. In order to get an accurate read on overall sentiment, at least some of your analysis needs to be conducted manually. If you rely solely on AI, you may inaccurately classify responses. This is because AI often overlooks subtle differences in responses that would be obvious to a human (e.g. “It’s great” compared to “It’s great, I guess.”).

Can sentiment analysis directly impact business outcomes?

Absolutely. Sentiment analysis provides real-time insight into how customers feel about your brand. By quickly addressing issues and enhancing customer satisfaction, businesses improve retention, reputation, and overall performance—leading directly to sustainable business growth. Contact Interaction Metrics to learn how we can help you implement sentiment analysis and start improving outcomes for your company today.



The post Analyzing Open Ended Survey Questions—Is AI Your Solution? appeared first on Interaction Metrics.

Analyzing open ended survey questions is the single most fruitful method for getting meaningful, honest feedback. Employees and customers can express themselves through text and say what’s really on their minds in a way that’s impossible through structured rating questions.

Open-ends are your ‘gold’ but extracting the gold is challenging, which is why companies look to AI to solve the problem. But is AI your best solution? If so, when and where should it be applied? Are there any downsides? This article answers these questions.

Open-Ended vs. Closed-Ended Questions

First, let’s get clear about the differences between open-ended and closed questions.

Examples of closed-ended questions are:

- The Net Promoter question

- Rating questions

- Binary (Yes/No) questions

These types of questions are useful when all possible responses can be captured in a short, simple list.

But open-ends are a better fit when you’re asking about topics that aren’t straightforward or where responses might vary widely. Because respondents aren’t limited by predetermined answers, they can express their most authentic and wide-ranging opinions. This, in turn, allows you to uncover answers to questions you might not have even thought to ask about in the ‘main’ parts of your surveys.

Some examples of open-ended questions include:

- What is <Competitor123> doing better than <CompanyABC>?

- What was the best part of your experience with <CompanyABC>?

- If a colleague asked you about <CompanyABC’s> products, what would you say?

The Challenges of Analyzing Open Ended Survey Questions

Open-ended survey answers are rich with valuable information.

But analyzing the results of your open ended survey questions requires you to synthesize all of that unstructured information into a coherent form.

Simply reading through comments isn’t practical. With so many responses, it’s nearly impossible to process and glean actionable insights. Moreover, our brains are limited by Miller’s law, which states that working memory can only handle about seven items at once. This makes it easy to overlook subtle patterns and emerging themes in the data.

Even worse, you won’t be able to quantify the emergent themes in the comments, so you won’t have a compelling report. After all, business audiences need more than stories; they need metrics and clear priorities.

Some researchers suggest reading only a few comments to get the general gist of things.

Others turn to word clouds because they’re the fastest and easiest way to give shape (no matter how spurious) to your open ends: just input your comments to see which words occur most often.

But of course, word clouds don’t find meaningful themes, and they don’t show what actions to take. For example, perhaps “product” occurs most often in your word cloud. But that doesn’t tell you whether customers are pleased or displeased with your product or how to improve the product experience.

Beyond Word Clouds

To make sense of open-ended survey data, you need Text Analysis, which, when conducted by experts (that’s us!) uncovers insights you can use. Here are some of the ways Text Analysis adds value:

Sentiment:

Determines whether responses are positive, negative, neutral, or mixed. For example, a phrase like “I love this product” signals positive sentiment, while “I’m disappointed with the service” points to negative sentiment. Whether you are using AI or human researchers, this is by far the easiest aspect of comments to analyze. That said, AI often gets mixed sentiment wrong, and having conflicting feelings about a company (or its products or services) is very common.

Effort:

Identifies how easy or difficult it is for users to achieve their goals with your product or service. Comments mentioning “frustrating” or “easy to use” highlight areas where customers struggle or succeed. Reducing customer effort can significantly improve satisfaction and loyalty.

Recurring Themes:

Highlight broader patterns across responses, allowing you to address problems or amplify areas where you’re strong. TIP: When analyzing open ended survey questions, start by considering which department each comment belongs to, and keep in mind that often within a single comment, multiple departments may be involved.

Text Tagging: The Social Science Technique

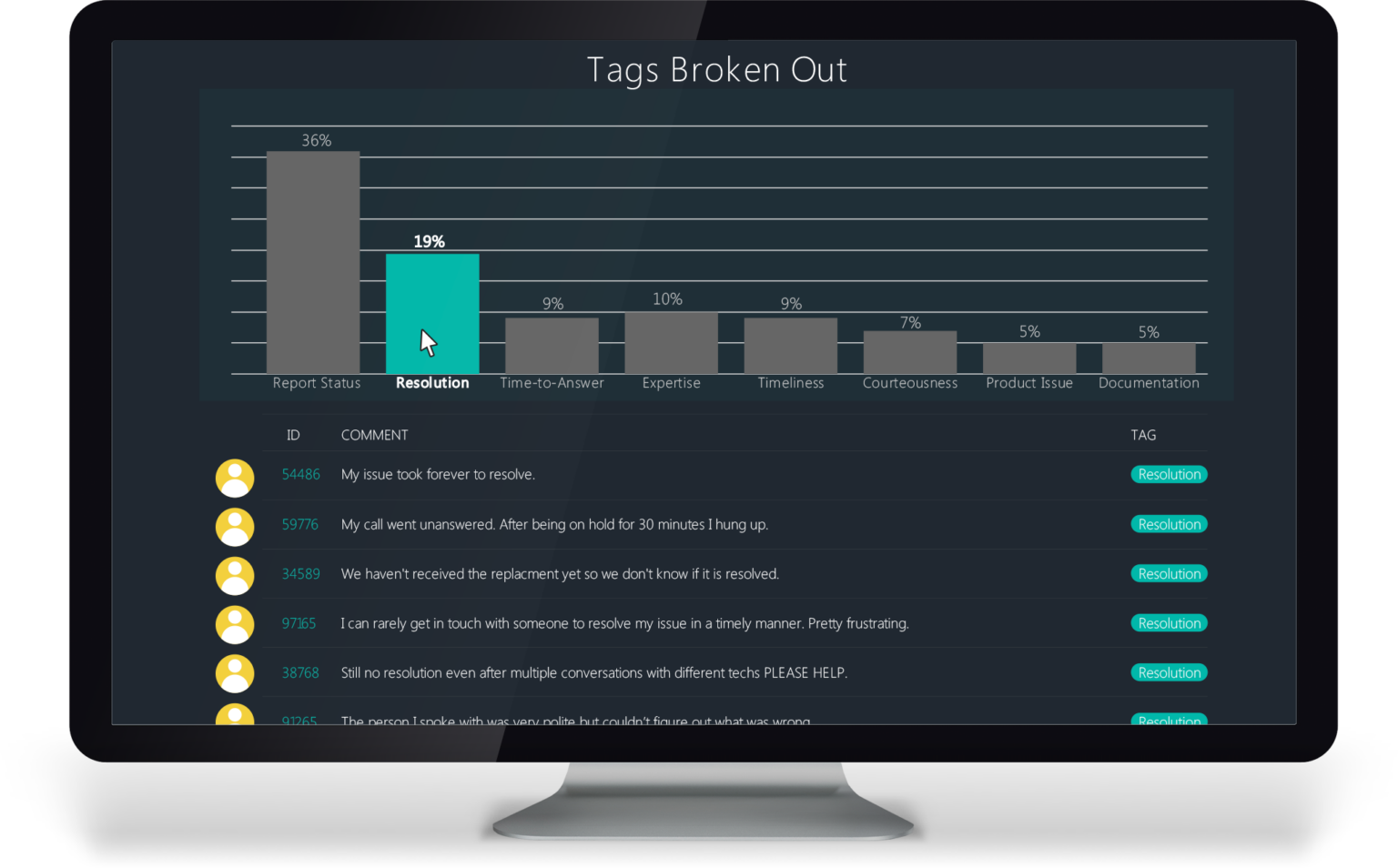

Text tagging categorizes comments using a system of definitions and protocols.

This technique is used by Sociologists, Anthropologists, and Psychologists. And it’s useful for analyzing the Customer Experience too!

Often, tagging is the most accurate and efficient method for extracting meaning from survey verbatims.

At a high level, here’s how the tagging process works:

Several analysts collaborate to build a tagging framework, cross-checking each other’s work until the framework captures the meaning of the text.

Then, the framework is used to classify customers’ comments, with each classification becoming progressively narrower and more specific.

Once all the tagging is in place, the tags are quantified to reveal themes in rank-order priority. Quantification is critical because it enables the emergent themes to be prioritized. In addition, quantification allows text themes to be correlated with outcome metrics like Net Promoter and Customer Satisfaction Scores.
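To make the quantification step concrete, here is a minimal sketch that counts tags and sets each theme beside an outcome metric. The responses, tag names, and the `rank_themes` function are hypothetical. Comparing the average rating per theme is a simple stand-in for a fuller correlation analysis.

```python
from collections import Counter, defaultdict
from statistics import mean

# Hypothetical tagged responses: theme tags plus a 0-10 likelihood-to-recommend rating.
responses = [
    {"tags": ["repair time", "pricing"], "rating": 3},
    {"tags": ["repair time"], "rating": 4},
    {"tags": ["friendly staff"], "rating": 10},
    {"tags": ["pricing"], "rating": 6},
    {"tags": ["repair time", "friendly staff"], "rating": 7},
]

def rank_themes(rows):
    """Return (tag, mention count, average rating) tuples, most frequent tag first."""
    counts = Counter(tag for row in rows for tag in row["tags"])
    ratings = defaultdict(list)
    for row in rows:
        for tag in row["tags"]:
            ratings[tag].append(row["rating"])
    return [(tag, n, mean(ratings[tag])) for tag, n in counts.most_common()]

for tag, n, avg in rank_themes(responses):
    print(f"{tag}: {n} mentions, average rating {avg:.1f}")
```

A theme that is both frequent and attached to low ratings is a natural candidate for the top of the priority list.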

For text tagging to be effective, it’s critical to hire researchers who are experienced with this technique and who are familiar with analyzing customer experience nuances–because nothing about customers’ comments is ever straightforward.

Text Analysis: The Details

Customers’ open-ended responses are often written informally, and it requires some work to assign them a category before they can be labeled accurately.

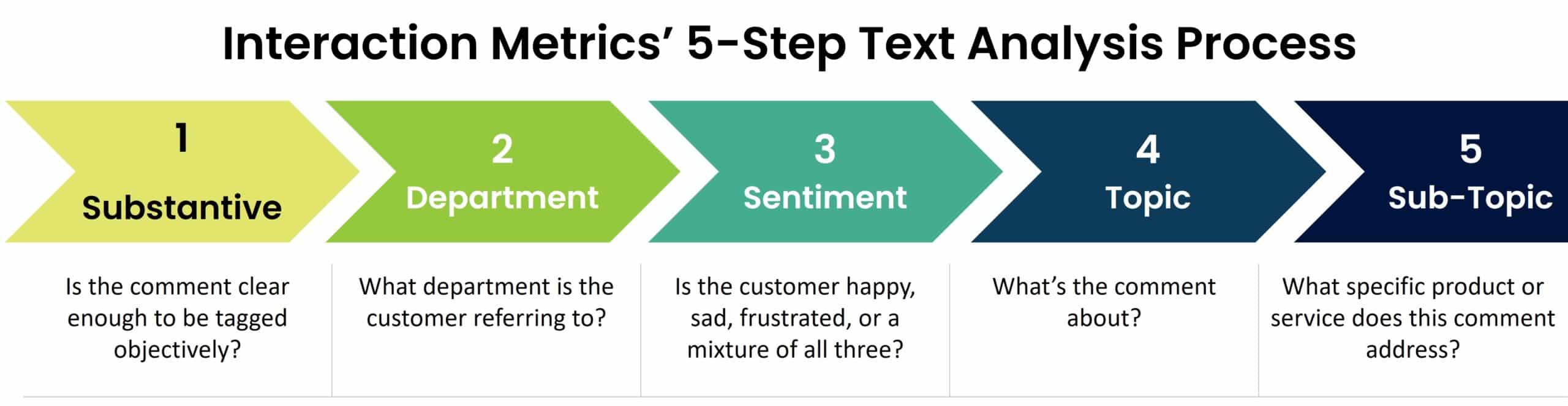

This requires a five-step process:

- Substantive: Is the comment clear enough to be tagged objectively?

- Department: What department is the customer referring to? For instance, that could be Repairs, Websites, Customer Support, Returns, Marketing, Warranties, etc.

- Sentiment: Is the customer happy? Exuberant? Frustrated? Angry? Etc.

- Topic: What’s the comment about? This is the most important part of the classification process, but it’s meaningless without going through the first three steps.

- Sub-Topic: What specific product or service does this comment address?

Most emphatically, the point of our 5-step process is to eliminate subjectivity. When multiple researchers independently arrive at the same conclusions, you know the findings are solid and that you can use this information to set new tactical or strategic directions.
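One common way to check whether independent researchers really do arrive at the same conclusions is an inter-rater agreement statistic. The sketch below uses Cohen’s kappa, a standard measure chosen here for illustration. The article does not say which measure is used in practice, and the rater labels are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Agreement between two raters, corrected for agreement expected by chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both raters must label the same non-empty set of comments.")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both raters pick the same label at random.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical department tags assigned independently by two researchers.
rater_1 = ["Returns", "Repairs", "Returns", "Website", "Repairs", "Returns"]
rater_2 = ["Returns", "Repairs", "Website", "Website", "Repairs", "Returns"]
print(f"kappa = {cohens_kappa(rater_1, rater_2):.2f}")
```

A kappa near 1 indicates the tagging framework is being applied consistently. A low value suggests the definitions need tightening before the results are quantified.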

Why Can’t You Just Use AI For This?

You can use AI when analyzing open ended survey questions, and that’s often what companies do, especially when they have large, continuously updated datasets. However, for AI to be accurate, no matter your company’s type or size, you’ll need to start by doing your own Text Analysis using human researchers operating not from an LLM but from general intelligence. Then, once you’ve set the framework up, you’ll need to continuously audit the results your AI is giving you.

Remember, currently, what is called AI is really a large language model (LLM). AI hasn’t yet achieved anything like intelligence or consciousness. Maybe in two years? Perhaps in 20? No one really knows. And without general intelligence, not only are hallucinations possible, but nuances may be entirely overlooked.

Here’s what your auditors need to pay close attention to:

- When sentiment is mixed, AI often gets it wrong by overfocusing on the first sentiment.

- While content extraction is improving, AI extracts meaning per question—but customers tend to repeat themselves among open-ended questions.

- Most AI tools write a summary of the themes, but the best action happens at the customer level.
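An audit of the first point can start with a simple comparison of AI labels against human labels on a sample of comments, broken down by sentiment category. This is a minimal sketch with hypothetical labels and a hypothetical `audit_accuracy` function, not a description of any specific tool.

```python
from collections import defaultdict

def audit_accuracy(human_labels, ai_labels):
    """Share of AI labels matching the human label, grouped by human label."""
    if len(human_labels) != len(ai_labels):
        raise ValueError("Each audited comment needs one human and one AI label.")
    totals, matches = defaultdict(int), defaultdict(int)
    for human, ai in zip(human_labels, ai_labels):
        totals[human] += 1
        matches[human] += human == ai  # True counts as 1
    return {label: matches[label] / totals[label] for label in totals}

# Hypothetical audit sample: human researchers' labels vs. an AI tool's labels.
human = ["positive", "negative", "mixed", "mixed", "negative", "mixed"]
ai = ["positive", "negative", "positive", "negative", "negative", "mixed"]

for label, accuracy in audit_accuracy(human, ai).items():
    print(f"{label}: {accuracy:.0%} of AI labels matched")
```

Breaking accuracy out by category matters because an overall score can look healthy while one category, such as mixed sentiment, is mislabeled most of the time.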

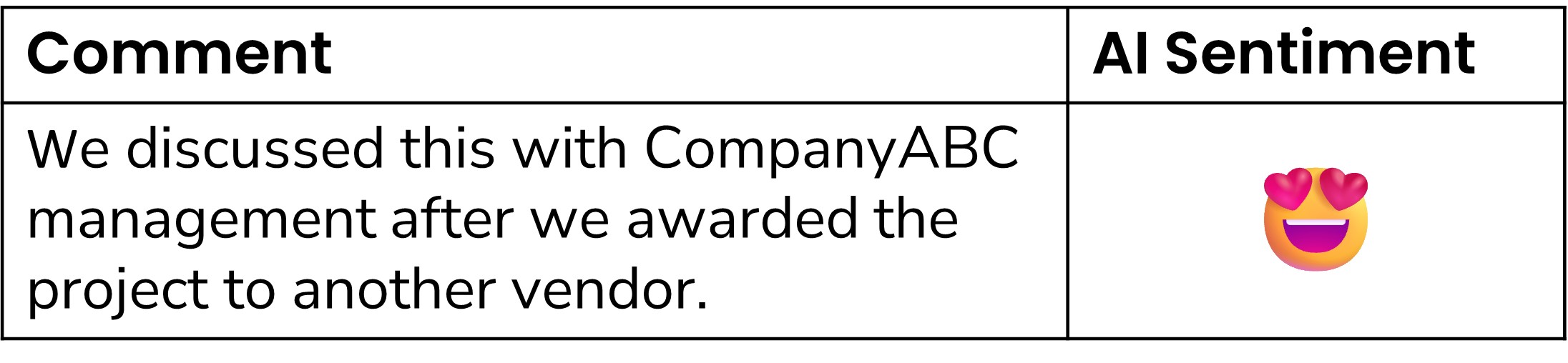

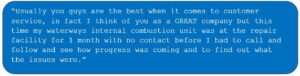

TIP: Sentiment analysis is the easiest part of verbatim analysis for humans, but we’ve seen AI algorithms with insufficient training incorrectly tag sentiment more than 70% of the time when sentiment is mixed or word choices are confusing. Look how difficult it can be to get sentiment right using AI:

In this example, it’s easy for humans to tell that the sentiment is negative—CompanyABC lost the project to another vendor. However, many AI tools we’ve tested mark it as a positive comment because of the word “award.”

Emotions are complicated, and comments have many cues that AI struggles to understand. And yet, sentiment analysis is only one piece of the puzzle and not the most actionable piece. It’s the themes — the content — in which the power of comments surfaces.

Here’s another example of two customer comments that humans innately understand are different but that AI is likely to treat as the same: “It was okay.” vs. “It was okay, I guess.”

Now consider another issue with AI.

Most AI programs that work at the customer level (not highlighted summaries like what you get from Zoom, Sonix, etc.) are set up to analyze the answers to each of your open ends. So, if customers repeat themselves, which they often do, AI might overemphasize certain topics, and you’ll wind up over-indexing those themes.

Humans, on the other hand, intuitively recognize when a customer is reinforcing a single point across multiple questions rather than introducing entirely new topics, which helps avoid misinterpreting the data.

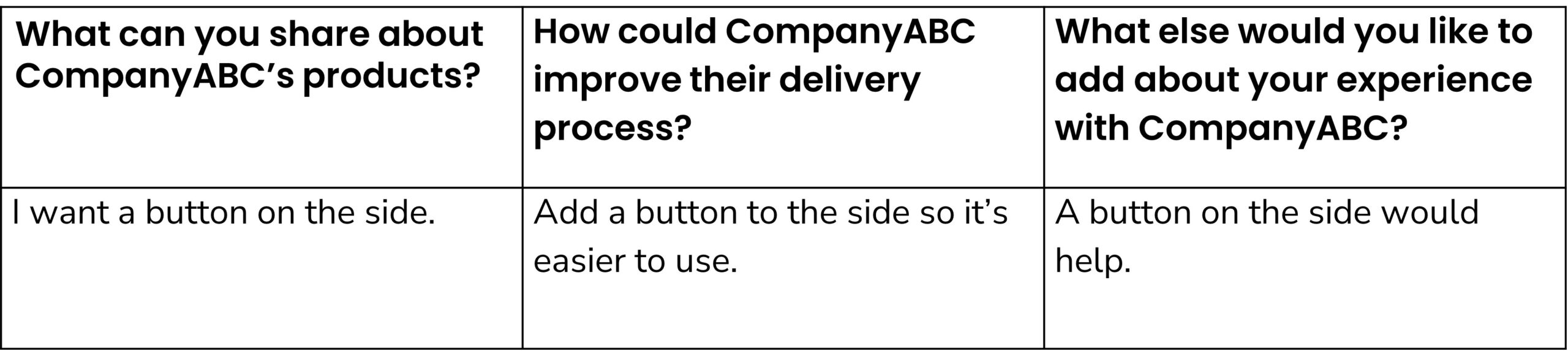

For example, consider this customer’s answers to the open-ended questions in CompanyABC’s survey:

AI tools might treat the side button as more important than it truly is because it was mentioned three times. In reality, the same customer repeated the comment three times, so it matters to this individual customer rather than to the group overall.
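One way to guard against this over-indexing is to count how many distinct customers raise a theme rather than how many answers mention it. The sketch below assumes each answer has already been tagged with a theme. The customer IDs, themes, and function names are hypothetical.

```python
from collections import Counter

# Hypothetical tagged answers: (customer_id, question, theme).
answers = [
    ("cust_1", "Q1", "side button"),
    ("cust_1", "Q2", "side button"),
    ("cust_1", "Q3", "side button"),
    ("cust_2", "Q1", "battery life"),
    ("cust_3", "Q2", "battery life"),
]

def mentions_per_answer(rows):
    """Naive count: every answer that mentions a theme adds one."""
    return Counter(theme for _, _, theme in rows)

def customers_per_theme(rows):
    """Deduplicated count: each customer counts once per theme."""
    unique_pairs = {(customer, theme) for customer, _, theme in rows}
    return Counter(theme for _, theme in unique_pairs)

print("By answer:  ", dict(mentions_per_answer(answers)))
print("By customer:", dict(customers_per_theme(answers)))
```

Counted by answer, the side button looks like the top theme. Counted by customer, it drops below battery life, which two separate customers raised.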

More about ChatGPT etc. is below, but the most common LLMs are designed simply to give you a narrative summary of ALL the comments (or answers to interview questions); they don’t provide customer-by-customer summaries, at least at this time.

We use many AI tools. So, we’re not down on AI; we just want you to understand its limitations so you know how to use it effectively.

How Do ChatGPT, Gemini, and Claude Stack Up for Text Analysis?

All three AI tools—ChatGPT, Gemini, and Claude—are capable of analyzing open-ended survey questions and other types of unstructured data. However, each has its unique strengths, limitations, and approaches to organizing insights.

We tested the advanced version of each of the three tools with a real customer dataset to see how they extract themes and summarize the data, and this is what we found. (We will continue to test the three main LLMs using different datasets and will update our findings frequently.)

Theme Extraction and Organization

Each tool derives themes and organizes them differently, often prioritizing certain insights over others:

ChatGPT: Tends to pull out overarching themes and narratives. For instance, in a customer survey, it might highlight that “customers think the product is too expensive but high quality.” It often provides a more generalized summary, which is useful for creating a high-level understanding of the data.

Gemini: Extracts themes differently, sometimes prioritizing operational aspects. Using the same dataset, Gemini might identify “the repair process takes too long” as the top theme. This approach can be particularly useful for pinpointing logistical or operational challenges.

Claude: Organizes results into bullet points, often grouping insights by specific attributes. For example, instead of providing a single top theme, Claude might generate a list such as:

- Products perceived as too expensive: Product A, Product B

- Issues with repair time: Product C, Product D

This detailed categorization can be valuable when granular insights are required to address specific areas of concern.

Strengths and Use Cases

ChatGPT: Best for crafting a narrative or summary of customer sentiment and key themes. It excels at producing well-written overviews and is ideal for presentations or reports requiring a topline narrative.

Gemini: Offers a more action-oriented approach by highlighting operational themes that may require immediate attention. It’s particularly useful for businesses looking to address process-related issues.

Claude: Delivers highly structured results that allow for a more analytical, itemized view of the data. It’s a strong choice for dissecting complex datasets with multiple dimensions.

Drawbacks and Limitations

ChatGPT: May overgeneralize themes, potentially missing subtle nuances. It requires careful prompting to ensure themes are prioritized correctly and not overlooked due to a lack of specificity.

Gemini: While prioritizing operational issues can be helpful, it may underemphasize emotional or subjective customer sentiments, leading to a more mechanical interpretation of the data.

Claude: Its bullet-point style and categorization might lack the cohesive narrative some users prefer. Additionally, it can sometimes focus too heavily on granular details, making it harder to see overarching trends.

Finding the Right Fit for Your Needs

When analyzing open-ended survey questions, choosing between ChatGPT, Gemini, and Claude depends on your specific goals:

- Need a high-level narrative for presentations? Go with ChatGPT.

- Need actionable insights into operational challenges? Try Gemini.

- Need a detailed breakdown of specific issues? Claude is your best bet.

AI and Analyzing Open Ended Survey Questions

With the prevalence of LLMs, AI is finding new uses at a rapid rate. Is analyzing open ended survey questions one of them?

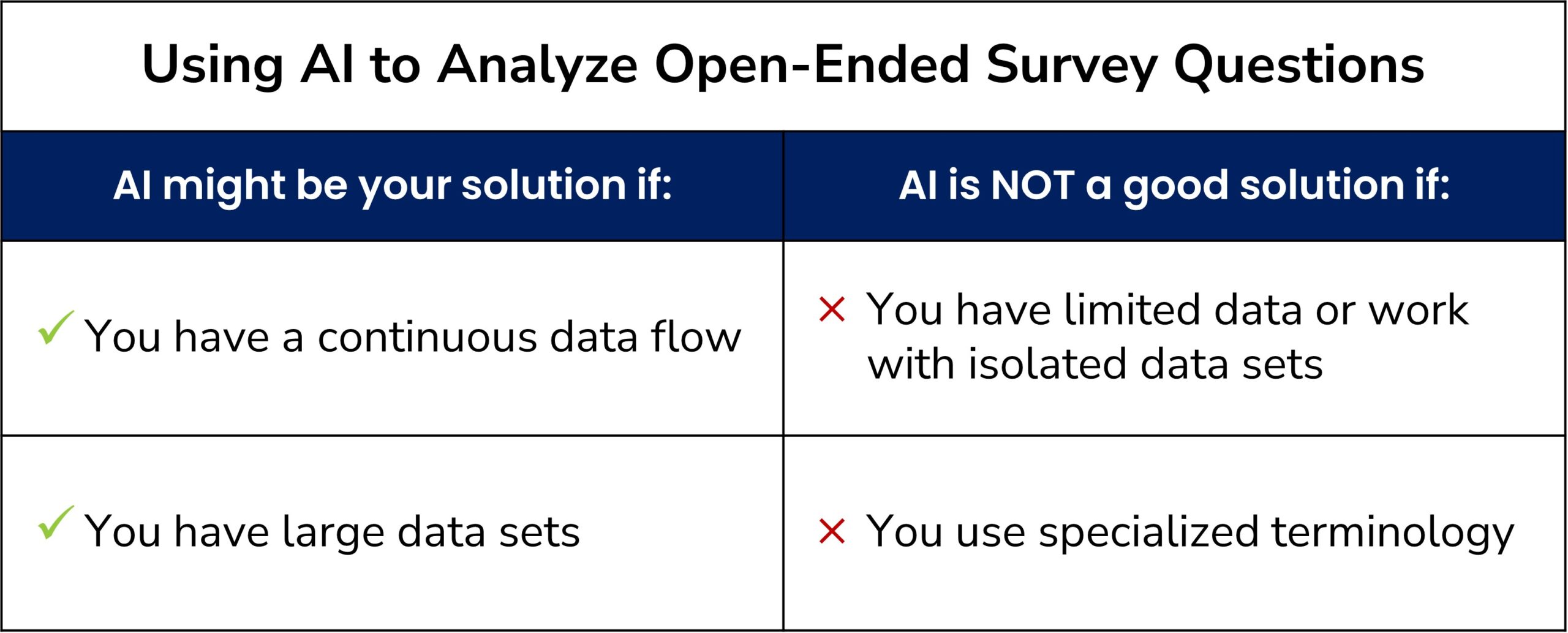

AI might be your solution if:

- You have researchers to set it up.

- Your dataset tends to be large and repetitive.

- You’re willing to audit the results periodically.

AI is NOT a good solution if:

- You have limited data: Midsize or smaller organizations may not have enough data for AI to perform accurately.

- You’re working with isolated datasets: AI isn’t practical for one-time surveys or occasional tracking studies due to the high upfront training costs.

- You use specialized terminology: AI requires extensive training to understand niche-specific terms, acronyms, or codes.

- You have budget constraints: AI-driven text analysis can be expensive; manual tagging by a research team might deliver similar results at a fraction of the cost.

So, is using AI for open ended question analysis a good fit for you? It could be, but you need to know how to use it so you can decide for yourself whether it’s the right choice for your company.

Here’s the truth: All AI-driven text analysis solutions begin with the same five-step process of researchers tagging comments.

Why? Because, for AI to be accurate, it must be trained. Who does the training? A team of researchers. And how do they train the algorithm? By tagging comments using these very same classic social science techniques.

So, it’s not a question of either Text Analysis or AI, because AI starts with Text Analysis. The question is whether your dataset is big enough and uniform enough to train an AI model. Either way, you’ll start with a team of researchers tagging!

Key Takeaways

- Open-ended questions allow your customers to share their thoughts, offering deeper insights than structured questions. They reveal themes you didn’t think to ask about.

- Analyzing open ended survey questions is challenging due to the volume and complexity of data, but Text Analysis offers structured methods to extract actionable insights.

- AI tools like ChatGPT, Gemini, and Claude excel at summarizing data, identifying themes, and speeding up the analysis process, but each has limitations, so you still need human researchers.

- AI might not always be suitable for open ended question analysis unless your dataset is large and continuously updated.

- Manual Text Analysis might be a better fit for smaller datasets, isolated surveys, or specialized terminology.

- When AI is used for analyzing open ended survey questions, it still needs training based on Text Analysis methods.

Text Analysis: A Proven Method

Using Text Analysis to extract the gold in your open-ended survey questions is a proven way to unlock critical business insights.

============================================

Care to discuss Text Analysis? Get in touch!

============================================

The post Analyzing Open Ended Survey Questions—Is AI Your Solution? appeared first on Interaction Metrics.


The post Customer Experience: Could AI Tools Be Your Solution? appeared first on Interaction Metrics.

Everyone is talking about using AI tools to measure the customer experience. Here we explain when AI companies like Clarabridge and CallMiner are the right fit. We also explain 2 situations when they are NOT the right fit. Check it out!

Or, if you want to read more about AI for customer experience, check out our blog here.



The post When & Where AI for Customer Experience Fits appeared first on Interaction Metrics.

Updated 10/17/2024: Artificial Intelligence has infiltrated every market, and the customer experience is no exception. While AI for customer experience is nascent, it already shows tremendous value as a way to measure experiences, and as a lever to improve them.

AI is a powerful tool.

But all too often, “AI” is slapped onto products without it being clear what that means or what value it adds.

“…for most [machine learning] projects, the buzzword “AI” goes too far. It overly inflates expectations and distracts from the precise way ML will improve business operations,” writes Eric Siegel in the Harvard Business Review.

So, is AI for customer experience just hype? No. But you need to know how to use it so you can decide whether it’s the right fit for your company.

Ask: Will AI capture the nuances of your customers’ experiences?

And ask: Will it account for your customers’ expectations, subconscious reactions, their wide range of sensations and feelings?

And also ask: If you’re in a niche market, is the expense of AI worth it? Can you justify the cost to your CFO? The answer depends on your situation.


1-Minute Overview of AI for Customer Experience

Unstructured Data: When customers give feedback through surveys and in day-to-day conversations with your company, that’s unstructured data. Unstructured data is invaluable for understanding customers’ feelings and thoughts, but only if your analysis accounts for the nuances.

This is so important that it bears repeating: analyzing unstructured data reaps incredible value, with the caveat that you honor the nuances and subtleties inherent in comments and conversations.

The first step in analyzing any large unstructured dataset is called “tagging.”

Whether you’re using AI or a team of researchers to analyze your data, the tagging process is the same – and it requires human input. I’ll explore how to tag further down, but essentially, it’s the process of uncovering themes by building out category definitions and applying those categories to the data.

Does every comment need to be tagged? No. Properly applied, statistical sampling gives you an accurate representation of a population within a known margin of error. That’s the purpose of sampling: you don’t need to analyze every comment or conversation, and it works well.

So where does AI for customer experience come in?

AI provides leverage for categorizing comments when you’re dealing with large datasets.

Then, used properly, AI can extract meaning from your text. LLMs enable sentiment analysis, entity recognition, text classification, and topic modeling. But keep in mind, all AI tools require researchers to train and check the algorithm.

For many companies, adding AI is not required. Especially for B2B companies, AI isn’t always necessary to achieve solid Text Analysis.

The Best Intelligence is About Asking Questions

Because AI IS the topic du jour, it’s vital to keep on top of its powers and limitations. As of this writing, the power of AI is its access to a vast repository of language.

However, again, as of this writing, AI lacks general intelligence, so it’s not intellectually curious, and can’t ask the kinds of questions that dramatically influence our lives.

- Socrates’ curiosity prompted and probed us to self-examine our lives.

- Copernicus’s curiosity led to the heliocentric understanding of the solar system.

- Newton’s curiosity led to the law of universal gravitation.

These examples set a high bar, but the point is clear. Intelligence is NOT simply the accumulation of facts; it’s equally — if not more — about curiosity and asking good questions.

Will AI ever ask paradigm-shifting questions—the kind that change how we understand the world? Maybe. But for now, it’s humans who ask the questions, and it’s our responsibility to ask the best questions we can.

Applying curiosity to the customer experience is often the difference between passing-grade experiences and those that amaze us. There are countless great questions to ask about your company’s customer experience. Here are a few:

- What unstructured data do we have that we’re failing to examine?

- Are we asking our customers disarming questions that get them to open up in honest ways?

- Is it possible that we’ve heard from customers with issues, and didn’t respond?

- Worse yet, might we have processes that discourage customers from giving us honest feedback? (We often see this when companies insist that their surveys be sent from a donotreply email address.)

How to Tell if AI is the Right Fit

Adding AI to Text Analysis can be a significant advantage for some companies that regularly accumulate large datasets of unstructured data – but it doesn’t mean that AI is the solution for analyzing all text-based data. And it certainly does not mean that AI is the only way to achieve text analysis.

I recently heard from a customer experience director of a multi-billion dollar company who had hired a large AI firm to analyze her company’s customer feedback. The AI algorithm required two research teams to train it for her project: five people working for the company itself, and another four people working for the AI company. The CX director raved about the insights that AI had produced from her company’s unstructured data.

She didn’t realize that those same insights could be achieved with a team of researchers and classic tagging and sampling. And in this case, her dataset wasn’t large enough for AI to add value. It wasn’t the AI that provided the insights; it was the nine people training the AI.

AI software salespeople often tell Customer Experience Directors they MUST buy an AI-powered text analytics solution to understand their data. But that just isn’t true.

5 Scenarios To Consider:

- If you are a midsize or smaller organization, it’s unlikely that your dataset is large enough for AI to work profitably. There’s simply not enough material for AI to learn from to tag accurately.

- You collect isolated datasets: AI would not make sense if you were only doing a once-yearly tracking study or a one-time survey, given the upfront costs of training it.

- Your dataset isn’t continuous: AI makes sense if your data is updated continually, for instance, if you are fielding thousands of customer calls daily. But if your data doesn’t come in a constant stream, then the AI model needs to be retrained with each new batch of data.

- You don’t have the budget for AI. The cost for AI-based Text Analysis adds up quickly, so bypassing AI and only working with a research team to do the tagging can achieve the same results at a fraction of the cost.

- You’re a niche company whose customers use many specialized terms that AI won’t understand without thousands of training hours. If your customers use acronyms, codes, and incident numbers, it will be challenging to leverage the power of AI.

In these scenarios, the best way to extract insights reliably is by using a research team adept with tagging techniques — but without adding AI. At best, adding AI would be an unnecessary expense. At worst, it would result in inaccurate analysis.

To summarize, if you have a large, continuous dataset, investing in and training AI makes sense. But if your dataset isn’t large, isn’t continuously updated, or involves specialized terminology, then it’s just not worth it.

AI for Customer Experience: How It’s Used

AI is being applied in different ways to improve the customer experience, with varying levels of success. One of the most widespread uses of AI to improve the customer experience is the chatbot, which attempts to mimic a human conversation.

Early chatbots were text-based and provided a limited set of pre-composed answers. Today, AI-driven chatbots use natural language understanding to help users solve problems. Unfortunately, customers often report that chatbots are frustrating, and the technology likely needs to evolve again before we hear from customers that their experiences have improved.

Using AI to measure experiences is also a growing trend, and some companies are using AI for customer experience to write survey questions. But given the uniqueness of each company and its objectives, this can lead to underwhelming data.

Other companies are using AI for customer experience to gauge call center conversations and customers’ answers to open-ended survey questions. Here, the goals are to:

- Assign sentiment scores for each conversation or comment

- Identify emergent themes within a corpus of content

The organizations that tend to benefit from AI platforms have ongoing conversations and surveys that yield massive, fairly consistent datasets.

In this situation, research teams (sometimes called the training team) work with AI to define, guide, set, test, and refine its algorithms. In fact, there are typically two research teams: one working on the client side, the other working directly for the AI company. Often, the research teams comprise a total of 10 to 12 employees or more.

Although AI learns from itself, it also needs training to be accurate enough to generate insights you can genuinely trust — and build your organization around.

Sentiment (how customers feel) is the easiest part of verbatim tagging, but we’ve seen AI algorithms with insufficient training incorrectly tag sentiment more than 70 percent of the time. It takes an investment in teams that know how to tag for that number to go down.
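One way to check a number like that on your own data is to audit a sample of machine-assigned sentiment tags against the tags your researchers assign. The Python below is a minimal sketch, not any vendor's method; the function name `sentiment_error_rate` and the sample labels are hypothetical.

```python
def sentiment_error_rate(human_tags, machine_tags):
    """Share of comments where the machine's sentiment tag differs from the human tag."""
    if len(human_tags) != len(machine_tags):
        raise ValueError("Both lists must cover the same comments, in the same order.")
    if not human_tags:
        raise ValueError("Need at least one tagged comment to audit.")
    mismatches = sum(1 for h, m in zip(human_tags, machine_tags) if h != m)
    return mismatches / len(human_tags)


# Hypothetical audit sample: researcher tags vs. tags from an AI tool.
human = ["negative", "positive", "negative", "neutral", "negative"]
machine = ["positive", "positive", "negative", "negative", "positive"]

error_rate = sentiment_error_rate(human, machine)
print(f"Machine disagreed with researchers on {error_rate:.0%} of comments")
```

Running an audit like this on each new batch of comments shows whether additional training is actually bringing the error rate down.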

What if you’re a smaller company without that huge dataset? Or what if you are a niche B2B company in which customers and staff use specialized vocabularies?

You still need to understand your customers’ opinions and sentiments, but the cost of partnering with an AI company like Clarabridge can be enormous. More importantly, your analysis and insights won’t be accurate because AI needs a large dataset to train on before it becomes consistent and precise.

Unstructured Data: Your Treasure Trove

So, how exactly are customers’ opinions gathered and analyzed?

When companies record calls or collect open-ended customer feedback, they end up with data outside of “yes/no” answers or numeric rankings. Instead, the data includes customers’ feedback in the form of spoken or written sentences like:

- “The product was great, but the wait time took forever!”

- “The staffer on the phone was so rude that I’m never shopping with you again.”

Sadly, many companies don’t know what to do with their unstructured data, so they never bother to analyze it, or they use cheap, out-of-the-box software for “insights.”

While the vast majority of meaningful data is unstructured, a recent article in MIT Management reports that only 18 percent of companies analyze it.

Unstructured data is a treasure trove of customers’ thoughts on your company, but only if you can analyze and quantify its meaning in an unbiased way. One of our specialties at Interaction Metrics is rigorous Text Analysis – where we glean objective, measurable insights from unstructured data.

Tagging Explained

Tagging is the first step in Text Analysis. It’s the method by which unstructured data is categorized and quantified to reveal meaningful insights. Several analysts work together to build a coding framework iteratively, going through multiple cross-checks. Then, that framework is used to classify comments by their various elements in descending order of specificity.

Like a taxonomy chart that starts with ‘kingdom’ and ends with ‘species,’ a coding framework starts broadly and narrows into specific categories. Tagging typically follows a process like this:

- Hypothesize: Examine a portion of the comments to understand their meaning and develop an initial tagging framework.

- Iterate: Iterate the tagging framework until it accurately captures the text’s meaning.

- Define: Build out tag definitions with examples to ensure tags are assigned objectively.

- Cross-check: Have multiple analysts tag the same comments independently. When they assign the same tags, the system is replicable.

- Quantify: Add statistical functions to quantify tags and analyze the data.

This tagging process is always the first step toward extracting measurable insights from unstructured data.
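The final "Quantify" step can be as simple as counting how often each tag appears. The following is a minimal Python sketch, assuming analysts have already assigned tags by hand; the tag names and the `tag_shares` helper are made up for illustration.

```python
from collections import Counter


def tag_shares(tagged_comments):
    """Return (tag, count, share of comments) tuples, most frequent tag first.

    `tagged_comments` is a list of tag lists, one per comment. A comment
    can carry more than one tag, so shares can add up to more than 100%.
    """
    total = len(tagged_comments)
    if total == 0:
        return []
    # set(tags) guards against the same tag being counted twice for one comment.
    counts = Counter(tag for tags in tagged_comments for tag in set(tags))
    return [(tag, n, n / total) for tag, n in counts.most_common()]


# Hypothetical output of the manual tagging steps above.
tagged = [
    ["wait time"],
    ["staff courtesy"],
    ["wait time", "pricing"],
    ["wait time"],
]

shares = tag_shares(tagged)
for tag, n, share in shares:
    print(f"{tag}: {n} comments ({share:.0%})")
```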

The advent of AI and machine learning means tagging can be done on a massive scale with enormous datasets.

However, it’s important to remember that even when AI and machine learning are involved, a team of researchers is still required to establish and test the coding framework and ensure it is tagging both the sentiment and theme correctly. The presence of AI doesn’t negate the need for a team of researchers; it just augments their work.

And whether it’s AI plus a research team or a team of researchers working with the tools of classic social science, the tagging process remains the same: qualitative data is labeled in order to be quantified.

Sampling: A Classic Statistical Method

Not every piece of data needs to be tagged to achieve accurate insights, thanks to statistical sampling techniques.

Statistical sampling of a population is the process of selecting a subset of individuals or items from a larger population to make inferences or draw conclusions about the entire population.

Within any given population, there are natural limits to diversity. Once a population is defined, roughly 370 individuals randomly selected from it will represent the rest of the population quite accurately (for a population of about 10,000, that is enough for a 95 percent confidence level with a margin of error of about ±5 percent). Think of the ripples on a pond’s surface: you don’t need to measure the height of every single ripple if you can capture a random subset of ripples.
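The figure of roughly 370 is consistent with a standard sample-size calculation. As a sketch, the Python below uses Cochran's formula with a finite population correction. The 95 percent confidence level, the 5 percent margin of error, the population of 10,000 comments, and the function name `sample_size` are all assumptions chosen for illustration.

```python
import math
import random


def sample_size(population, margin_of_error=0.05, z=1.96, p=0.5):
    """Number of comments to sample for a given margin of error.

    z=1.96 corresponds to a 95% confidence level; p=0.5 is the most
    conservative assumption about how opinions are split.
    """
    n0 = (z ** 2) * p * (1 - p) / margin_of_error ** 2  # size for an unlimited population
    n = n0 / (1 + (n0 - 1) / population)                # finite population correction
    return math.ceil(n)


# Hypothetical corpus of 10,000 comments.
comments = [f"comment {i}" for i in range(10_000)]
n = sample_size(len(comments))

rng = random.Random(42)            # fixed seed so the draw is repeatable
to_tag = rng.sample(comments, n)   # simple random sample, without replacement
print(n, len(to_tag))
```

A tighter margin of error or a higher confidence level raises the required sample, so the right number depends on how precise your findings need to be.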

Sampling is critical for Text Analysis. Because doing it correctly is essential to many customer experience initiatives, I’ll describe it in more detail in upcoming blogs.

Tools in the AI Toolbox

AI for customer experience might be limited, and it may not be the best match for your niche or B2B company, but that does not mean that AI is useless in the B2B customer experience setting. Far from it. There are lots of simple AI-driven tools that will save you loads of time every day. At Interaction Metrics, in addition to large AI platforms, here are just a few of those simple AI tools that we find useful:

- Sonix.ai is an inexpensive automated transcription service. It’s much more accurate than most free transcript tools and can add bullet points to summarize conversations. However, it’s not useful if you need to rigorously evaluate a conversation and assign a score. That’s because so much of what’s ‘said’ is left unsaid or is expressed through tone and conversational pauses. AI still isn’t able to account for these nuances and subtleties.

- Gemini and ChatGPT4 can help accumulate information when writing. Of course, that information needs to be thoroughly fact-checked, but these tools can offer a starting point for content. Never ask these tools to source direct quotes. They can spit out made-up quotes and false attributions, and you’ll find yourself in nonsense land with “quotes” from Gandhi on machine learning and Lincoln on email!

- Canva’s Magic Edit and Magic Eraser allow users to transform images and remove objects from pictures easily.

- And Grammarly, along with Microsoft tools, is essential for correcting punctuation, spelling, and grammatical errors. Every email, every blog post, and every piece of writing that the consumer reads should be run through editing tools before it’s published.

The bottom line is that AI is a tool, and tools need to fit the needs of the user — not the other way around. AI can certainly be the right solution for your company if it’s designed specifically for your industry. That means it’s guided by human researchers who are experienced in your specific field.

The Right Tool for the Job

At Interaction Metrics, our rigorous Text Analysis supports two main use cases:

- You need a research team to train and quality-check your AI platform.

- You have a smaller or standalone dataset, or one full of technical terms, and partnering with an AI platform is cost prohibitive.

There’s no need to buy a backhoe when a spade can do the job better.

At Interaction Metrics, we know how to measure and analyze both conversations and comments. And we know when and where to apply AI profitably.

So, if you’re using AI and need help calibrating it OR have a dataset that doesn’t lend itself to AI, reach out!


Toward making sense of all that unstructured data that gives us our beautiful and strange world!

Want to save money with AI? Get in touch.



The post Not Another Word Cloud—Please! appeared first on Interaction Metrics.

Now that you’ve asked the Net Promoter question, what should you do with the follow-up question? Are you considering simply reading the comments, or using a word cloud to pull out major themes?

Geoff Colvin writes in ‘The Simple Metric That’s Taking Over Big Business’ (Fortune magazine, May 2020) that the star of Net Promoter is its follow-up question, usually stated as something like ‘How could we improve?’ or ‘Why did you give that score?’

Colvin cites Marc Stein, Senior VP at Dell Technologies, who says, “The real gem and actionable insights (from the Net Promoter question) come from the verbatim transcripts.” And Deborah Campbell, VP of insights at Verizon, adds, “It’s not about chasing the number (NPS), it’s about understanding what our customers want and need from us.”

Unfortunately, I’ve seen many companies throw their verbatims out, do a word cloud, or let their verbatims accumulate for years because they didn’t have a scientific way to analyze them.

When it comes to verbatims, the root of the problem is that in the same way customer experiences are varied and complex, comments are messy and unpredictable.

Comments can be brief or lengthy, vague or technical, general or specific. Some comments stay on-topic; others trail off. And of course, customers refer to similar issues in different ways.

And this applies not just to survey comments but other sources of customer data like reviews, chats, interviews—all of which can give insight into why customers feel, think, and rate the way they do. While unstructured data like this may appear to defy quantification, that’s not actually the case.

All data can and should be quantified because without quantification, you only have anecdotes. Not only are anecdotes not actionable, they can be misleading.

AI-based Text Analytics

So what do you do with the verbatims? Well, if you are a sizable consumer company with a large, consistent data set to match, then AI-based Text Analytics are at least part of your solution.

However, if you’re a small or mid-sized company, budget could be an issue, but the deeper issue is that it’s unlikely you’ll have enough verbatims for AI to learn from—self-learning being the hallmark of good AI. And if you’re a B2B company, your customers may be using specialized phrasing covering widely divergent topics, a level of nuance that tends to stump AI.

In these cases, applying research intelligence to your verbatims using classic social science techniques is the only reliable way to extract insights—more about this in a minute. First, here’s a quick rundown of non-solutions that companies often employ to deal with their verbatims.

Machine Assigned Sentiment Scores

Much like the word cloud, these are inexpensive tools that analyze verbatim sentiment, but what you’re really getting is a re-rendering of your Net Promoter Score. Customers who give you a Net Promoter rating of 0-2 are generally angry while those who rate you a 10 are impressed, but so what? You still don’t know how to improve. Besides, for complex comments, there are cases in which machine-based sentiment scoring is more often wrong than right.

Thumbs Up, Thumbs Down

Some companies simply end their survey findings report with a few examples of thumbs-up and thumbs-down comments or a word cloud to summarize customers’ verbatims.

About those Ubiquitous Word Clouds

The reason many companies turn to word clouds is they are a default chart for survey platforms. Even if you’re not using a platform, you can upload your verbatims for free and get a representation of your verbatims like this:

But what does this tell you? Well, for one, that customers mention “Alice” more often than “dormouse.” But do they see Alice in a positive or negative light? And if you want dormouse to make more of an impression, how would you do that?

Then There’s the Reading Method

In the same Fortune article cited above, California Closets CEO Bill Barton explains that he starts his day reading the previous day’s verbatims because it’s grounding. While reading verbatims is important, the problem is that the brain’s working memory starts cutting off after about seven items. So even if you read thousands of comments, you simply can’t remember and synthesize all that information in a quantifiable way.

Beyond the Word Cloud: Research Intelligence

But if word clouds and reading don’t provide actions and insights, what’s the best approach? Again, for companies with large, consistent data sets, AI should be a strong consideration for at least part of the solution. But even if you are implementing AI, you still need Research Analysts to establish and test the initial coding framework. And ideally, analysts continue to monitor your AI to ensure you are getting accurate, updated analysis.

Research Intelligence Unpacked

Since the beginning of social science, anthropologists, psychologists, and sociologists have been coding narrative data. For many companies, coding remains the most effective way to extract meaning from their survey verbatims and their other unstructured data like interviews and reviews. The best term I’ve seen to describe this activity is research intelligence.

With Research intelligence, multiple analysts work together to build a coding framework. The framework is built iteratively, going through multiple cross-checks. Then, that framework is used to classify comments by their various elements in descending order of specificity.

Like a taxonomy chart that starts with ‘kingdom’ and ends with ‘species,’ a coding framework starts broadly and narrows into specific categories.

Theme Quantification

Once all the coding is in place, it’s important to quantify the codes to reveal themes in rank order priority. Quantification is a crucial step because now you can correlate your verbatims’ themes with Net Promoter and other outcome scores.

By quantifying your themes, you learn how customers think. Moreover, you learn how to boost loyalty and every other KPI critical to your organization.
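To make the link between themes and outcome scores concrete, here is a minimal Python sketch that ranks themes by how often they occur and computes a Net Promoter Score among the respondents who mentioned each theme. The records, theme names, and helper names are hypothetical. It uses the standard NPS definition: the percentage of promoters (ratings of 9 or 10) minus the percentage of detractors (ratings of 0 through 6).

```python
from collections import defaultdict


def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_theme(responses):
    """Rank themes by frequency and report the NPS among respondents citing each."""
    scores_by_theme = defaultdict(list)
    for rating, themes in responses:
        for theme in themes:
            scores_by_theme[theme].append(rating)
    ranked = sorted(scores_by_theme.items(), key=lambda item: len(item[1]), reverse=True)
    return [(theme, len(scores), nps(scores)) for theme, scores in ranked]


# Hypothetical coded survey responses: (0-10 rating, themes coded from the verbatim).
responses = [
    (3, ["status updates"]),
    (9, ["product quality"]),
    (6, ["status updates", "repair time"]),
    (10, ["product quality"]),
    (8, ["status updates"]),
]

theme_table = nps_by_theme(responses)
for theme, mentions, score in theme_table:
    print(f"{theme}: {mentions} mentions, NPS {score:+.0f}")
```

With only a handful of mentions per theme, a per-theme score like this is noisy, so it is best read alongside the mention counts.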

A Typical Customer Comment

Here’s a typical comment that’s been edited to protect client confidentiality. But just like most customer comments, the writing is choppy and fails the Hemingway test.

Coding

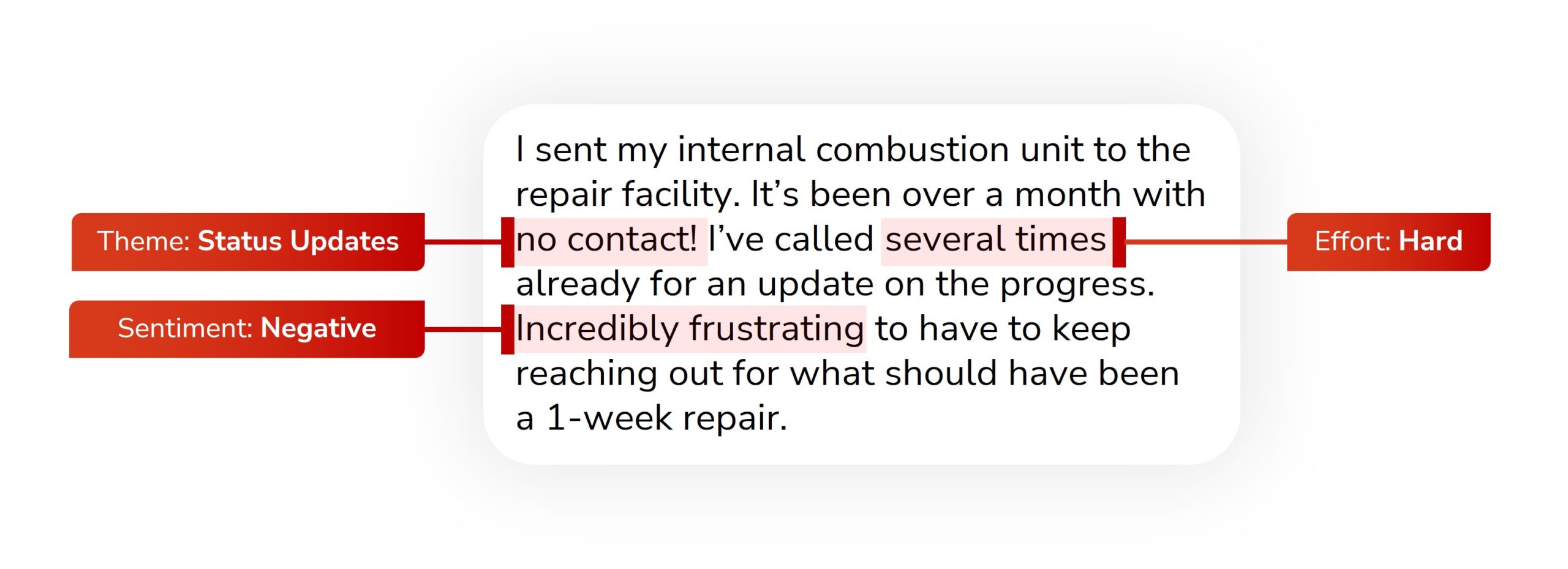

What follows is a breakdown of how to code the above comment. For accurate coding, every client must have its own codes and classification systems, but in a general way, the classification that follows applies to many kinds of companies.

- Substantiveness: The top level of classification, substantiveness, refers to whether the comment is intelligible enough to be coded objectively. The comment above is substantive; the customer has a point that can be understood.

- Class: This identifies which department the comment belongs to. Here, the comment is about a repairs experience and gets directed to the repairs team.

- Type: This shows whether the customer is voicing a complaint or explaining why they want to keep things the way they are. In this case, the verbatim is marked ‘improve.’

- Sentiment: This is always the easiest category for researchers to determine. But because this comment starts off by doubling down favorably, it could throw some AI-based text analytics off. Yet researchers quickly understand that while this customer is usually impressed, this time, they’re frustrated.

- Tag: This is the most important part of the entire classification system because it’s where the researcher dials in on what the comment is about. In this instance, the comment is about status updates. However, keep in mind that tags need the rest of the classification system in order for them to have actionable meaning.

- Sub & Product Tags: These are used to clarify which products or services the comment is about. In this case, the waterways internal combustion unit refers to a part of the client’s HVAC engine line.
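One way to picture the classification levels above is as a single record per comment. The Python dataclass below is only an illustrative sketch: the fields mirror the levels described in the list, while the class name, field names, and example values are assumptions based on the description of the sample comment.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CodedComment:
    """One customer comment, classified from the broadest level to the most specific."""
    text: str
    substantive: bool                    # intelligible enough to code objectively?
    comment_class: Optional[str] = None  # department the comment belongs to
    comment_type: Optional[str] = None   # e.g. "improve" vs. "keep as is"
    sentiment: Optional[str] = None      # e.g. "positive", "negative", "neutral"
    tag: Optional[str] = None            # what the comment is about
    product_tags: List[str] = field(default_factory=list)

    def is_actionable(self) -> bool:
        """A tag only carries meaning alongside the levels above it."""
        return self.substantive and all(
            [self.comment_class, self.comment_type, self.sentiment, self.tag]
        )


# Hypothetical coding of the sample comment discussed above.
coded = CodedComment(
    text="(comment text withheld)",
    substantive=True,
    comment_class="repairs",
    comment_type="improve",
    sentiment="negative",
    tag="status updates",
    product_tags=["waterways internal combustion unit"],
)
print(coded.is_actionable())
```

Keeping every level on the same record is what lets a tag such as "status updates" be filtered by department, type, and sentiment later on.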

The Team Removes Subjectivity

Using a team of Analysts when coding is critical because when multiple analysts examine the data independently and arrive at the same codes, you have a replicable system in which subjectivity has been removed. Ensuring this kind of objectivity is a crucial pillar of science and is what makes for good research.
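Agreement between analysts can be measured rather than assumed. A common statistic for two coders is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. No specific agreement measure is named here, so treat this Python sketch as one possible check; the tag lists are invented for illustration.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who tagged the same comments in the same order."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must tag the same non-empty set of comments.")
    n = len(coder_a)
    # Observed agreement: share of comments given the same tag by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: based on how often each coder used each tag.
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    expected = sum(counts_a[t] * counts_b[t] for t in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used a single identical tag throughout
    return (observed - expected) / (1 - expected)


analyst_1 = ["status updates", "pricing", "status updates", "repair time", "pricing", "status updates"]
analyst_2 = ["status updates", "pricing", "repair time", "repair time", "pricing", "status updates"]

kappa = cohens_kappa(analyst_1, analyst_2)
print(f"Cohen's kappa: {kappa:.2f}")
```

A kappa near 1 indicates the coding framework is being applied consistently; a low value suggests the tag definitions need another iteration.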

Seth Godin, Close the Loop

Through verbatim analyses, we’ve learned about training issues that were eminently solvable, although not a quick fix. Other issues can be solved in short order; for example, you might learn that you need to apologize to a customer for an overdue report, and email it pronto.

Whether it’s a quick fix or not, verbatims identify gaps; and when acted upon, verbatim analysis closes those gaps. As Seth Godin has said: “If you’re not going to read the answers and take action, why are you asking?”

But having a closed-loop system where you learn about customers’ issues and then resolve them is not the only reason to have a verbatims discipline.

Two Types of Insights

Close-ended rating questions like ‘how satisfied are you,’ or ‘how likely are you to recommend us to a friend’ are limiting because they only tell you how customers feel within the limitations of the questions you thought to ask. Customer verbatims, on the other hand, are a window into customers’ thoughts.

With verbatims, customers speak for themselves, so you learn about their expectations versus their perceptions. And you also learn how well your survey matches the customer experience.

Identifying gaps between your survey and the customer experience gives you two kinds of insights, the first about your survey, the second having broad application for your entire company.

#1 Improve Your Survey:

For example, if customers consistently mention issues that are not addressed in your survey’s rating questions (e.g., documentation quality), then those topics can be turned into rating questions to improve the usefulness of future surveys.

#2 Improve Your Company:

Other times, you learn about a language disconnect between you and your customers. For instance, customers might comment about missing inventory while you might describe this as a supply chain issue.

Recognizing this disconnect gives you the opportunity to adjust your word choice to match the words customers use.

This clarifies not only your survey but also your websites, instruction manuals, and every other kind of direction and description you use.

Thanks Geoff Colvin!

If I had one thing for you to take away, it’s that customers’ thoughts are messy and complex, but that’s exactly why they’re interesting and valuable! Thanks, Geoff Colvin, for clarifying it’s the follow-up question that makes Net Promoter sing. The verbatim responses it elicits are one of your most valuable data assets, provided you rise above the word cloud! To learn more about verbatim analysis go here.

